Conclusion

Elixir’s Task module allows you to write unbelievably clean concurrent code. It turns out a tiny change made a not-so-tiny impact on the performance of the function.


Benchmarking the two versions of the function

To check whether concurrency made any change at all to the performance, you can benchmark the functions with benchee. All you need to do is add benchee to deps in mix.exs (and run mix deps.get).

The relevant part of the benchmark output:

CPU Information: Intel(R) Core(TM) i7-7700HQ CPU @ 2.80GHz

| Name | ips | average | deviation | median | 99th % |
| --- | --- | --- | --- | --- | --- |
| call_apis_async | 2.61 | 0.38 s | ±2.63% | 0.37 s | 0.55 s |
| call_apis | 0.89 | 1.13 s | ±3.08% | 1.12 s | 1.42 s |

It’s clear from the results that there is a massive difference between the two functions. The Comparison section shows that the call_apis_async function is almost 3 times faster than the call_apis function.

More importantly though, call_apis took 1130ms on average, while call_apis_async took only 380ms, a full 750ms faster!
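A benchee comparison of a sequential and a concurrent function could look roughly like the sketch below. It is a standalone script rather than the mix.exs setup used in this post, and several parts are assumptions made for illustration: the `"~> 1.0"` version requirement, the use of `Mix.install/1` (Elixir 1.12 or later, with network access to fetch the dependency), and the hypothetical `fake_get` function that sleeps instead of calling HTTPoison.get/1.

```elixir
# bench.exs, run with: elixir bench.exs
# The version requirement below is an assumption, not taken from the post.
Mix.install([{:benchee, "~> 1.0"}])

# Hypothetical stand-in for an HTTP request: sleeps to simulate latency.
fake_get = fn delay_ms ->
  Process.sleep(delay_ms)
  {:ok, delay_ms}
end

# Simulated per-request latencies in milliseconds.
delays = [50, 100, 150]

suite =
  Benchee.run(
    %{
      # Sequential variant: one simulated request after the other.
      "sequential" => fn -> Enum.map(delays, fake_get) end,
      # Concurrent variant: one process per simulated request.
      "concurrent" => fn ->
        delays
        |> Task.async_stream(fake_get, max_concurrency: length(delays))
        |> Enum.into([], fn {:ok, res} -> res end)
      end
    },
    warmup: 1,
    time: 3
  )
```

Benchee prints system information, per-scenario statistics, and a Comparison section that ranks the scenarios against the fastest one. Inside a mix project, the same `Benchee.run/2` call would simply wrap the real functions instead of the stubs.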


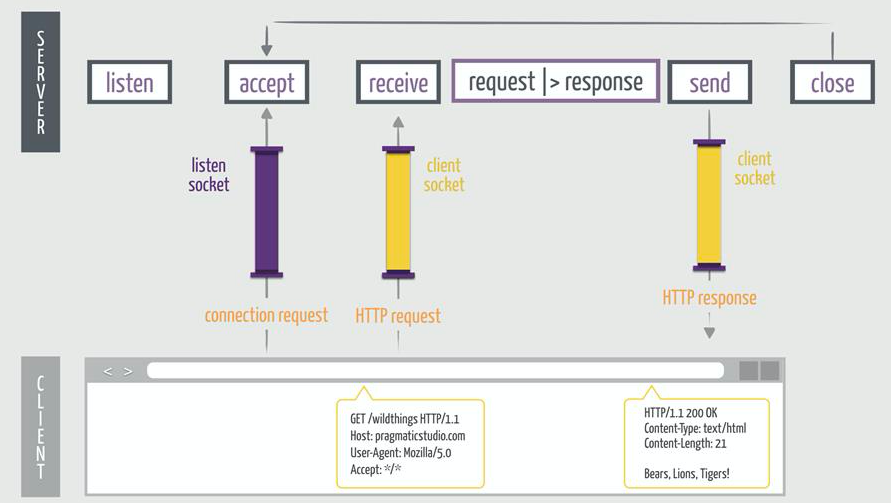

The call_apis function is going to map over a list of URLs and make an HTTP request to each one with HTTPoison.get/1. This code makes each request sequentially: it will wait until each request has finished before making the next request. If each request is not dependent on the result of another, chances are we are wasting a lot of time by not making the requests concurrently.

In theory, we could make this function significantly faster if we initiate all the requests at the same time and return once all of them are complete. As the image above shows, in an ideal case, the concurrent code should only take as long as the longest single request. The sequential code takes as long as all the requests combined, as they are executed one after the other.

One of the biggest selling points of Elixir/Erlang is that the BEAM VM is able to spawn “processes” and execute code concurrently inside them. While Elixir allows you to very easily spawn processes with functions like Kernel.spawn_link/1, you’re much better off using the awesomely powerful abstractions provided by the Task module. The most common use case for tasks is to convert sequential code into concurrent code by computing a value asynchronously.

For this example, I think the most useful functions in Task are Task.async_stream/3 and Task.async_stream/5:

    def call_apis_async() do
      # @urls is assumed to be the module attribute holding the list of URLs
      @urls
      |> Task.async_stream(&HTTPoison.get/1)
      |> Enum.into([], fn {:ok, res} -> res end)
    end

All I’ve done is replace the single call to Enum.map/2 with calls to Task.async_stream/3 and Enum.into/3. No callback hell and no need for Promises. In fact, this looks like good ol' sequential code!

Could such a tiny change make any difference to the performance of the function? Applying Task.async_stream/3 should make each API call concurrently in a separate process, so theoretically there should be a performance improvement. However, we should never assume that a code change improves performance, so let’s go ahead and benchmark the code to make sure.
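To make the difference between the sequential and the concurrent approach concrete without needing a network, here is a minimal sketch that uses only the standard library. The module name `ConcurrencySketch`, the `fake_get/1` function (a hypothetical stand-in for HTTPoison.get/1 that just sleeps), and the delay values are all assumptions for illustration; they are not the post's actual code.

```elixir
defmodule ConcurrencySketch do
  # Hypothetical stand-in for HTTPoison.get/1: sleeps for `delay_ms`
  # to simulate network latency, then returns an {:ok, _} tuple.
  def fake_get(delay_ms) do
    Process.sleep(delay_ms)
    {:ok, delay_ms}
  end

  # Sequential: each simulated request starts only after the previous one ends.
  def call_sequential(delays) do
    Enum.map(delays, &fake_get/1)
  end

  # Concurrent: Task.async_stream/3 runs each call in its own process and
  # yields {:ok, result} tuples in input order; Enum.into/3 unwraps them.
  def call_concurrent(delays) do
    delays
    |> Task.async_stream(&fake_get/1, max_concurrency: max(length(delays), 1))
    |> Enum.into([], fn {:ok, res} -> res end)
  end

  # Runs `fun` and returns {elapsed_ms, result}, measured with :timer.tc/1.
  def timed(fun) do
    {micros, result} = :timer.tc(fun)
    {div(micros, 1000), result}
  end
end

delays = [100, 200, 300]

{seq_ms, seq} =
  ConcurrencySketch.timed(fn -> ConcurrencySketch.call_sequential(delays) end)

{con_ms, con} =
  ConcurrencySketch.timed(fn -> ConcurrencySketch.call_concurrent(delays) end)

IO.puts("sequential: #{seq_ms} ms, concurrent: #{con_ms} ms")
```

Both calls return the same list, but the sequential run takes at least the sum of the delays, while the concurrent run should take roughly as long as the longest one. The sketch sets `max_concurrency` explicitly because the default is `System.schedulers_online()`, which on a machine with few cores would limit how many simulated requests run at once.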